10 Gbps: digitalising with flexible megawatts

The digital transformation and artificial intelligence demand intelligent, efficient energy management for the energy transition to be truly effective. To achieve this, we need a flexible model, integrated with the grid and compatible with the restrictions of the electricity system: a model capable of accelerating digitalisation without eroding the security of supply. Without manageable energy, digitalisation cannot scale.

By Rafael Sánchez Durán

Digitalisation and AI are almost always measured in connectivity units: fibre, Wi-Fi 7, sensors, the cloud, data models. However, there is a less flashy yet decisive condition that determines whether this modernity takes off or remains on paper: available electrical capacity. Without megawatts, megabits remain a promise that is hard to materialise. Electrical infrastructure is not an operational detail: it is the enabling condition.

When we talk about a Smart Campus, we imagine screens, devices, and massive data flows supported by ‘smart’ street furniture. But the turning point comes when we ask two basic questions: how much power does it need to operate continuously, safely, and at scale; and, if that power is not available, how long will it take to become available? In too many projects, these questions arise late in the game, as if electricity were invisible and infinite. It isn’t: it is the foundation upon which everything else is built. Asking about megawatts at the end almost always proves costly.

Let’s consider a medium-sized data centre, with around 30 MW of electrical capacity, integrated into a science park, an advanced industrial hub, a port, a university campus, or even a hospital environment. What does that mean in electrical terms? Broadly speaking, a data centre of that size draws as much sustained power as a medium-sized city (≈150,000 inhabitants), fed from one or two grid nodes. And, viewed from the electricity system’s perspective, it is not just ‘concentrated consumption’: it is demand with a systemic impact. It places strain on available capacity, conditions firm capacity, and reduces the margin for new connection requests in its vicinity.
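As a rough check on that comparison, and assuming the full 30 MW is drawn continuously all year (an upper bound used here only for illustration), the figures above work out as follows:

$$
\frac{30\ \text{MW}}{150{,}000\ \text{inhabitants}} = 200\ \text{W per inhabitant}, \qquad 30\ \text{MW} \times 8{,}760\ \text{h} \approx 263\ \text{GWh per year}.
$$

A sustained 200 W per inhabitant corresponds to roughly 1,750 kWh per person per year.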

This reality forces us to rethink how we manage the demand of data centres and, by extension, large campuses. We can either continue treating them as passive consumers who merely request power, or understand them as flexible consumers who are also part of the solution. Demand can also be a system asset.

Three ideas for a flexible and active model

What does this shift in perspective entail? It involves three simple but structural ideas that turn a high-demand campus into an active component of the system, rather than just a demanding consumption point. The key is to move from ‘connecting’ to ‘integrating’.

- The first idea is to assume that not all of a campus’s power needs to be contracted as a firm, unconditional service. A portion does: the power supply for critical systems. But there is another manageable fraction: climate control, certain industrial processes, electric vehicle charging, and, in a data centre, non-urgent computing tasks that can be shifted or modulated. This opens the door to contracts that distinguish between firm power and flexible (or conditional) power – that is, power that the system can ask the customer to reduce for a few hours a year during moments of congestion or technical restriction. Separating firm from flexible power frees up capacity and improves the allocation of the truly scarce resource: the grid.

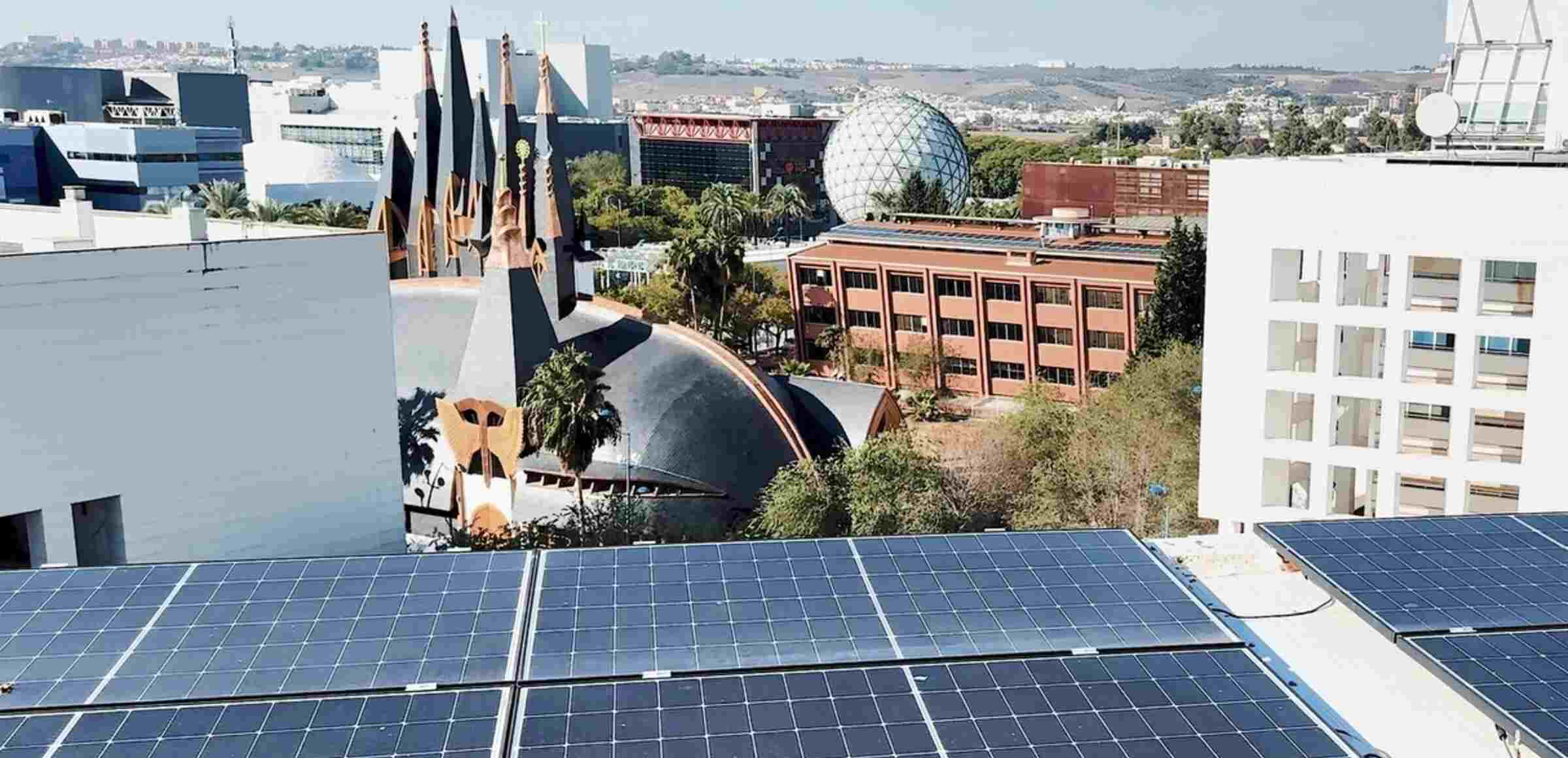

- The second idea can be summed up as Bring Your Own Capacity: if you want to connect sooner, it’s not enough to simply ask for a large connection; you must provide part of the capacity you need. This implies sizing your own renewable generation, incorporating dispatchable backup, and adding battery storage with several hours of autonomy. This represents a cultural shift: we must stop treating local generation and storage as a ‘sustainability extra’ and recognise them for what they also are: capacity infrastructure. Self-generation and batteries are no longer accessories; they become part of the supply engineering.

- The third idea is to approach the challenge wearing ‘ICT glasses’, not just from an electrical engineering standpoint. In a modern data centre, critical real-time loads coexist with shiftable loads: heavy analytics, model training, batch processing, simulations, or development tasks. If the operator can pause or delay some of that work for a few hours a year, software is turned into an energy resource: computational flexibility is used to temporarily reduce grid demand without compromising the service. Digital flexibility can translate into electrical flexibility.

When we combine these three pieces, the picture changes completely. The data centre ceases to be a monolithic block and becomes a manageable load. This allows for earlier connections without automatically passing all reinforcement costs onto the grid, while simultaneously better distributing the efforts among the developer, the distributor, and all users. Managing demand means accelerating connections and distributing adaptation costs more fairly.
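To make the combination concrete, here is a minimal sketch in Python of how the three ideas interact during a congestion event. Everything in it is an illustrative assumption rather than a figure from any real project: the `CampusLoad` class, the `grid_import_mw` function, and in particular the way the 30 MW is split between firm, flexible, and shiftable computing load, as well as the 6 MW of on-site capacity.

```python
from dataclasses import dataclass


@dataclass
class CampusLoad:
    """Hypothetical breakdown of a campus's demand, in MW."""
    firm_mw: float               # critical systems, never curtailed
    flexible_mw: float           # climate control, EV charging, some processes
    shiftable_compute_mw: float  # batch jobs, model training, simulations
    onsite_mw: float             # own generation plus battery discharge


def grid_import_mw(load: CampusLoad, congestion: bool) -> float:
    """Power drawn from the grid in normal operation or during congestion.

    Simplifying assumptions: in normal operation the whole load is served
    by the grid; during congestion the flexible and shiftable parts are
    fully curtailed and on-site capacity offsets part of the firm load.
    """
    if not congestion:
        return load.firm_mw + load.flexible_mw + load.shiftable_compute_mw
    return max(0.0, load.firm_mw - load.onsite_mw)


# Illustrative split of a 30 MW campus (made-up numbers).
campus = CampusLoad(firm_mw=18.0, flexible_mw=5.0,
                    shiftable_compute_mw=7.0, onsite_mw=6.0)

print(grid_import_mw(campus, congestion=False))  # 30.0
print(grid_import_mw(campus, congestion=True))   # 12.0
```

Under these assumed numbers, the campus would need only 12 MW from the grid during the few hours of congestion, instead of 30 MW of firm capacity all year round.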

This approach is already being applied, for example, in the UK, where distributors have shown that it is possible to connect sooner – without waiting to execute all reinforcements – via flexible or non-firm connections. The customer connects with an agreed capacity, but accepts that, during specific moments of congestion or technical limitation, their demand can be automatically modulated using Active Network Management (ANM) systems and Distributed Energy Resource Management Systems (DERMS). In effect, digital operation creates ‘virtual’ capacity.

A paradigm shift

If we apply this logic to the context of a highly digitalised Smart Campus, we actually already have almost all the pieces: rooftop solar photovoltaics, batteries, backup generators, building management systems, smart meters, and data centres. What is usually missing is a framework that recognises flexibility and a layer of intelligence that makes it operational.

Specifically, two elements are often missing: a framework for interacting with the electricity system, via the distributor, that recognises and, when appropriate, remunerates that flexibility; and an intelligence layer – ideally a digital twin – that coordinates resources in real time with clear rules: when to discharge the battery, when to adjust climate control, when to delay charging a fleet, and when to postpone heavy computation. Without a framework and intelligence, flexibility exists ‘in theory’, but fails to materialise.
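The ‘clear rules’ of such an intelligence layer can be sketched as a simple priority list. The Python example below is a deliberately minimal illustration, not a description of any real digital twin or DERMS product: the `dispatch` function, the resource names, the available megawatts, and the order in which resources are called on are all assumptions chosen for the example.

```python
def dispatch(reduction_mw, resources):
    """Allocate a requested demand reduction across flexible resources.

    `resources` is a list of (name, available_mw) pairs, used in list
    order. Returns the plan and any reduction that could not be covered.
    """
    plan = {}
    remaining = reduction_mw
    for name, available_mw in resources:
        used = min(available_mw, remaining)
        if used > 0:
            plan[name] = used
        remaining -= used
    return plan, remaining


# Hypothetical resources, in an assumed order of preference.
resources = [
    ("battery_discharge", 4.0),  # discharge the battery first
    ("climate_control", 1.5),    # then adjust climate control
    ("fleet_charging", 1.0),     # then delay charging the fleet
    ("batch_compute", 5.0),      # finally postpone heavy computation
]

# The distributor asks for a 6 MW reduction during a congestion event.
plan, unmet = dispatch(6.0, resources)
print(plan)   # {'battery_discharge': 4.0, 'climate_control': 1.5, 'fleet_charging': 0.5}
print(unmet)  # 0.0
```

A real system would also have to account for battery state of charge, comfort limits, and job deadlines; the point here is only that the rules can be made explicit and testable.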

Viewed from an integrated perspective bridging electricity and computing, a 10 Gbps Smart Campus is not just a campus with excellent connectivity: it is an environment where the electrical load curve is understood and managed with the same rigour as the data traffic curve. A Smart Campus learns to manage megawatts the way it manages megabits. And it understands that its 30 MW are not solely a ‘problem’ for the grid, but a lever of flexibility capable of stabilising the system, integrating more renewables, and, simultaneously, accelerating its own digitalisation.

If we continue to treat these large consumers as we did decades ago – solely as firm loads demanding power without any further commitment – it is only logical that grids will saturate sooner and connection times will lengthen. If, on the other hand, we adopt a dual model that separates firm from flexible power, we will be able to connect sooner, make better use of assets, and distribute adaptation costs more fairly. Flexibility improves system timing, efficiency, and robustness.

That is the leap at stake: moving from a campus that merely ‘consumes electricity’ to a campus that is an active part of a smart electricity system. That is where the alliance between the energy transition and digital transformation can truly make a difference. Digitalising also means learning to consume differently: more intelligently and more responsibly.